I was rather thinking of a larger-scale approach using massdns (blechschmidt/massdns on GitHub), a high-performance DNS stub resolver for bulk lookups and reconnaissance (subdomain enumeration). I have some twisted ideas for condensing large blacklists automatically. I will implement them in Python over the next few days.

Cool, I had a quick look at the massdns program.

I would be interested to know where some of these come from.

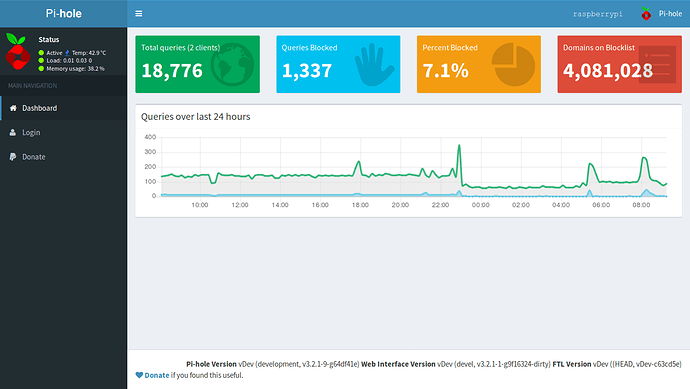

https://smokingwheels.github.io/Pi-hole/fromlog

https://smokingwheels.github.io/Pi-hole/fromlogtosh

You are welcome to collect the bigger list from http://45.63.60.89/hosts

It's on one of those disposable cloud servers, in case I run low on money.

Many of the entries in the proposed logs are not valid domains, I see...

I will grab the hosts file for evaluation, soon.

Now I am curious too.

But I like KISS too.

It is a bit crude, checking only A records, but I hope to have results soon (it is still running in a VM):

while read -r DOMAIN; do (host -t a "$DOMAIN" 8.8.8.8 1> /dev/null) || echo "$DOMAIN" | tee -a ~/list.failed; done < /etc/pihole/list.preEventHorizon

Interesting: with the default lists, 16% of the domains failed to return an A record.

$ wc -l list.preEventHorizon

106632 list.preEventHorizon

$ wc -l list.failed

17094 list.failed

Just out of curiosity: how long did you need for the 100k domains? Some of the 17k will have AAAA records, of course.

I started it here 6 hours ago and think it finished within the last hour.

So it took 4 or 5 hours, I guess, on a 100 Mbit line.

Well, I like KISS too, but I compiled massdns for the Pi: it resolves 100k domains in 1 minute on a 10 Mbit line. Just give it a try; it's quite simple, too.

Note: we should spend more time condensing blacklists and converting entries to wildcards where possible than just accumulating a lot of domains. My 2 cents.
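One way to read "condensing" is to drop every entry that is already covered by a listed parent domain. The sketch below does only that. It assumes the surviving entries are then loaded as wildcards (for example a dnsmasq `address=/example.com/0.0.0.0` line, which also matches subdomains), so it changes what gets blocked; the function name `condense` is made up for illustration.

```python
def condense(domains):
    """Drop every domain that is already covered by a listed parent domain."""
    # Normalise: trim whitespace, lower-case, drop a trailing root dot.
    listed = {d.strip().lower().rstrip(".") for d in domains if d.strip()}
    kept = set()
    for domain in listed:
        labels = domain.split(".")
        # Candidate parents of "a.b.example.com": "b.example.com", "example.com".
        # The bare TLD ("com") is deliberately not considered a parent.
        parents = (".".join(labels[i:]) for i in range(1, len(labels) - 1))
        if not any(parent in listed for parent in parents):
            kept.add(domain)
    return sorted(kept)


if __name__ == "__main__":
    sample = ["ads.example.com", "example.com", "x.ads.example.com", "tracker.test"]
    print(condense(sample))
```

This never invents a parent that is not already in the list, so sibling entries such as `a.example.com` and `b.example.com` stay separate unless `example.com` itself is listed.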

I know now what I wanted to know

But always nice to know a more efficient way exists.

Sorry, but at least the result should be the same. So, call it a draw.

AAAA records:

while read -r DOMAIN; do (host -t aaaa "$DOMAIN" 8.8.8.8 1> /dev/null) || echo "$DOMAIN" | tee -a list.no.aaaa.too; done < list.failed

$ wc -l list.no.aaaa.too

17039 list.no.aaaa.too

Still 16% waste of space and search time in dnsmasq. And I think the base lists are quite well curated.

By the way: what is the content of these files? They are not hosts files.

E.g.:
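The two sequential loops (A records, then AAAA records) could be combined into one concurrent pass. This is only a rough Python sketch under a few assumptions: it uses the system resolver through `socket.getaddrinfo` rather than 8.8.8.8, it treats any lookup error (including temporary failures) as "does not resolve", and the names `resolves` and `find_unresolvable` are made up here. It should not be run against a Pi-hole as resolver, since blocked domains would then appear to resolve.

```python
import socket
from concurrent.futures import ThreadPoolExecutor


def resolves(domain):
    """Return True if the system resolver finds any address (A or AAAA)."""
    try:
        socket.getaddrinfo(domain, None)
        return True
    except (socket.gaierror, UnicodeError):
        # gaierror: lookup failed; UnicodeError: name cannot be encoded as a hostname.
        return False


def find_unresolvable(domains, resolve=resolves, workers=50):
    """Check all domains concurrently and return those that do not resolve."""
    domains = [d.strip() for d in domains if d.strip()]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map keeps the input order, so results line up with domains.
        results = list(pool.map(resolve, domains))
    return [d for d, ok in zip(domains, results) if not ok]


if __name__ == "__main__":
    # Path taken from the shell loop in this thread; adjust as needed.
    with open("/etc/pihole/list.preEventHorizon") as handle:
        for name in find_unresolvable(handle):
            print(name)
```

The `resolve` parameter is injectable so the logic can be tried without network access, for example with a stand-in function that answers from a fixed set.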

0.0.0.0 sally.example.com"w

0.0.0.0 "+e.text

The Pi-hole logs pick these up when a YaCy search engine is crawling a site.

https://yacy.net

Also, some websites sometimes have ".local" names as well; I am not too sure what that means.

I have released a program in QB64 to extract such invalid DNS names from your own logs: https://smokingwheels.github.io/qb64/piholelogext.bas

If you want to use OneDrive, please whitelist these.

Highlight from right to left, then right-click and copy.
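For comparison, a minimal Python sketch of the same idea: separating syntactically invalid names (such as `sally.example.com"w` or `"+e.text`) from valid ones in hosts-format lines. This is not a port of the QB64 program. The label rule used here (letters, digits, hyphen, plus underscore as a lenient assumption) and both function names are choices made for this example.

```python
import re

# One DNS label: 1-63 chars of letters, digits, "-" or "_", not starting or ending with "-".
# Allowing "_" is an assumption; strict hostname rules do not permit it.
LABEL = re.compile(r"(?!-)[A-Za-z0-9_-]{1,63}(?<!-)")


def is_valid_hostname(name):
    """Return True if every dot-separated label of `name` looks like a DNS label."""
    name = name.rstrip(".")
    if not name or len(name) > 253:
        return False
    return all(LABEL.fullmatch(label) for label in name.split("."))


def split_hosts_lines(lines):
    """Split hosts-format lines ("0.0.0.0 name") into (valid, invalid) name lists."""
    valid, invalid = [], []
    for line in lines:
        fields = line.split("#", 1)[0].split()  # strip comments, then tokenise
        if len(fields) < 2:
            continue  # blank line, comment, or no hostname field
        name = fields[1]
        (valid if is_valid_hostname(name) else invalid).append(name)
    return valid, invalid


if __name__ == "__main__":
    sample = ['0.0.0.0 sally.example.com"w', '0.0.0.0 "+e.text', "0.0.0.0 sally.example.com"]
    print(split_hosts_lines(sample))
```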

Text format for copy and paste of lists.

https://smokingwheels.github.io/Pi-hole/

Updated from https://zerodot1.github.io/CoinBlockerLists/

Thank you to ZeroDot1 and all those who contribute to it.

Instagram is also blocked. I think that's not necessary ;D

instragram.com

www.instragram.com

Are unlisted now.

Lists updated

GitHub has a hard limit of 100 MB per file and a warning at 50 MB. I had a 150 MB file sneak in.

Text format of all blocklists.

Note: The yacysearchengine one blocks Google.

I know you said "yacysearchengine" blocks Google, but I have that one unchecked and Google is still being blocked. How do I remove that list altogether? I can't figure out which file is blocking Google.
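One rough way to find out which file contains a given domain is to search local copies of the lists. The sketch below assumes the lists have already been downloaded and read into memory as lines; the function name `lists_blocking` and the list names in the example are placeholders, not the actual file names.

```python
def lists_blocking(domain, blocklists):
    """Return the names of the lists that contain `domain` as an exact entry.

    `blocklists` maps a list name to an iterable of lines, either in hosts
    format ("0.0.0.0 name") or with one plain domain per line.
    """
    domain = domain.lower().rstrip(".")
    hits = []
    for name, lines in blocklists.items():
        for line in lines:
            fields = line.split("#", 1)[0].split()  # ignore comments
            if domain in (field.lower().rstrip(".") for field in fields):
                hits.append(name)
                break  # one match per list is enough
    return hits


if __name__ == "__main__":
    # Placeholder data for illustration only.
    example = {
        "list-a": ["0.0.0.0 www.google.com", "0.0.0.0 example.org"],
        "list-b": ["0.0.0.0 miner.example"],
    }
    print(lists_blocking("www.google.com", example))
```

This only finds exact matches, so a wildcard or regex entry that covers the domain would not show up here.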